Google has changed how Search Console evaluates and reports errors for Breadcrumbs and How To structured data. Impact. The number of errors, issues, and other metrics shown in Google’s enhancement reports for Breadcrumbs and How To structured data may change.

Only the reporting has changed. This reporting change does not affect whether your rich results are visible in Google Search.

Next steps. If your site uses Breadcrumbs or How To structured data, it is recommended that you review the reports in Google Search Console and address the updated errors and issues. It is possible to see a change in severity and a difference in the number of Breadcrumbs and How To entities and issues reported for your property.

In this blog, we will discuss the error reporting in Google Search Console.

Google Search Console

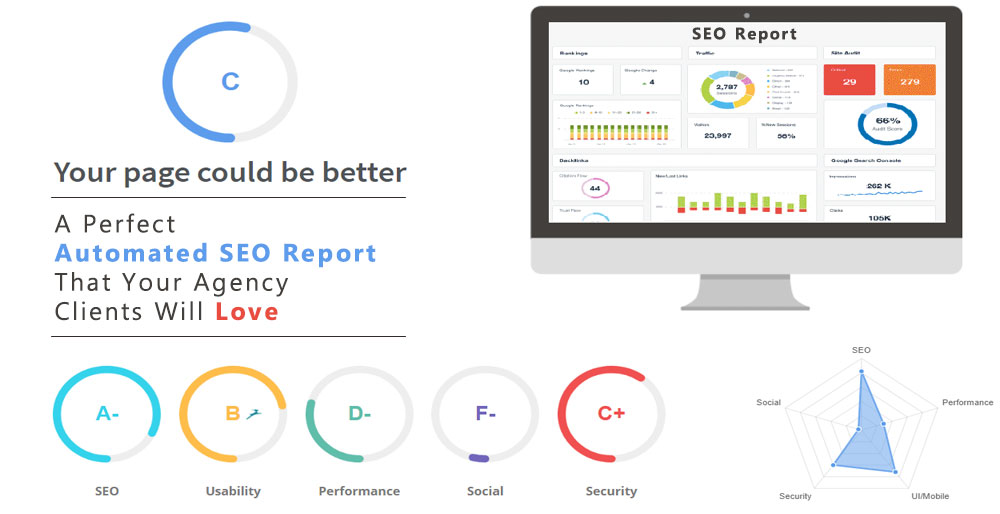

Google Search Console is a free tool that lets you identify and troubleshoot the crawling and indexing issues Google encounters on your site. Additionally, it can give you insight into which pages rank well and which do not.

Search Console: How to Get Started

Verification of domains

In Google Search Console, you should verify your website’s ownership if you haven’t already. We highly recommend verifying at the domain level because it allows you to view data for all of your site’s subdomains.

There is a good chance you will miss out on some vital information if you do not verify each version of the website.

Index Coverage report: navigating the page

You can start with any property once you have verified your website.

Technical problems may keep your website from ranking higher in search results. The report classifies each URL as Error, Valid with warnings, Valid, or Excluded.

Following the issue resolution workflow in the tool, you should inform Google once you think the issue has been resolved. Towards the end of the blog, we outline the steps for doing this.

Errors listed in Google Search Console

If Googlebot encounters an issue while crawling your website and cannot understand one of your pages, it gives up and moves on. In this case, your page won’t be indexed and won’t appear in search results, which will significantly affect your search engine rankings.

A few of these errors are listed below:

- Server error (5xx)

- Redirect error

- Submitted URL blocked by robots.txt

- Submitted URL marked ‘noindex’

- Submitted URL seems to be a soft 404

- Submitted URL returns unauthorized request (401)

- Submitted URL not found (404)

- Submitted URL has crawl issue

Start here by focusing your efforts.

Here’s how to fix a server error (5xx):

When the page was requested, you received a 500-level error from your server.

A 500 error indicates that a website’s server cannot fulfill your request due to some problem. Google could not load the page due to a problem with your server.

Load the page in your browser to see whether it works. The issue may have resolved itself, but you should confirm.

If your website has experienced outages in recent days, or if a configuration may be preventing Googlebot from accessing your site, email your IT team or hosting company.
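The browser check described above can also be scripted. The following is a minimal sketch in Python using only the standard library. The function names `describe_status` and `fetch_status` are hypothetical helpers written for this post, not part of Search Console, and the status groupings simply mirror the error names used in this blog.

```python
import urllib.error
import urllib.request


def describe_status(code: int) -> str:
    """Map an HTTP status code to the kind of issue discussed in this post."""
    if 500 <= code <= 599:
        return "server error (5xx)"
    if code == 404:
        return "not found (404)"
    if code == 401:
        return "unauthorized request (401)"
    if 300 <= code <= 399:
        return "redirect"
    if 200 <= code <= 299:
        return "ok"
    return "other"


def fetch_status(url: str, timeout: float = 10.0) -> int:
    """Return the HTTP status code for url (urllib follows redirects itself)."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        # urllib raises for 4xx and 5xx responses; the code is still available.
        return err.code
```

To try it, call `describe_status(fetch_status(url))` with the address of a page flagged in your report. Keep in mind that a request from your own machine may get a different response than Googlebot does, so treat the result as a first check only.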

Redirect Error: How To Fix It:

There is a problem with your redirect, so it doesn’t work. It needs to be fixed!

Most websites have had their primary URL changed a few times, so redirects end up pointing to other redirects. Google does not like following these chains because it has to crawl several URLs before it reaches the content. Eliminate the steps in the middle by pointing each redirect directly at the final URL.
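To illustrate what removing the middle steps means, here is a small Python sketch. It assumes your redirects are available as a simple mapping from old URL to new URL; the function name `collapse_redirects` and the example paths are made up for illustration.

```python
def collapse_redirects(redirects: dict[str, str]) -> dict[str, str]:
    """Point every source URL directly at the final URL of its chain."""
    collapsed = {}
    for source in redirects:
        seen = {source}
        target = redirects[source]
        # Follow the chain until we reach a URL that no longer redirects.
        while target in redirects:
            if target in seen:
                raise ValueError(f"redirect loop detected at {target}")
            seen.add(target)
            target = redirects[target]
        collapsed[source] = target
    return collapsed


# Hypothetical chain: /old-page -> /newer-page -> /newest-page
chain = {
    "/old-page": "/newer-page",
    "/newer-page": "/newest-page",
}
print(collapse_redirects(chain))
# {'/old-page': '/newest-page', '/newer-page': '/newest-page'}
```

After collapsing, every old URL reaches the final page in a single hop. The loop check is there because a redirect that eventually points back to itself has no final URL to collapse to.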

Submitted URL marked ‘noindex’:

You are sending Google mixed signals: you submitted the page for indexing, but the page itself says “Do not index me!” Check your page’s source code and look for the word “noindex”. If you see it, modify the page’s code directly, or go into your CMS and find the setting that removes it.

An X-Robots-Tag HTTP response header can also noindex a page, but it’s harder to spot if you’re unfamiliar with developer tools.
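Both places can be checked with a short script. The sketch below is one possible approach in Python, assuming you already have the page’s HTML and its response headers in hand. The names `RobotsMetaParser` and `has_noindex` are hypothetical, and the check only looks at `<meta name="robots">` and the `X-Robots-Tag` header, not at crawler-specific variants.

```python
from html.parser import HTMLParser
from typing import Optional


class RobotsMetaParser(HTMLParser):
    """Collect the content of every <meta name="robots"> tag."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        if (attributes.get("name") or "").lower() == "robots":
            self.directives.append((attributes.get("content") or "").lower())


def has_noindex(html: str, headers: Optional[dict] = None) -> bool:
    """Return True if the HTML or the response headers contain noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    if any("noindex" in directive for directive in parser.directives):
        return True
    # Header names are case-insensitive, so compare in lowercase.
    for name, value in (headers or {}).items():
        if name.lower() == "x-robots-tag" and "noindex" in value.lower():
            return True
    return False


page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(has_noindex(page))                                   # True
print(has_noindex("<p>Hello</p>"))                         # False
print(has_noindex("<p>Hello</p>", {"X-Robots-Tag": "noindex"}))  # True
```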

The URL submitted has a crawl issue:

A problem prevented Google from fully downloading and rendering your page’s content. Use the URL Inspection tool (formerly Fetch as Google) and check whether the page Google renders looks different from what you see when you load it in your browser.

This could be because your content depends on JavaScript to load. Most search engines ignore JavaScript, and even Google does not understand it perfectly. Blocked resources and a long page load time may also be to blame.

Warnings listed by Google Search Console

Warnings are less serious than errors, but you should still pay attention. If you resolve the warnings that Google Search Console uncovers, you may increase the likelihood that your content will be indexed and improve your ranking.

Indexed, though blocked by robots.txt:

The page was indexed even though a robots.txt rule blocks it.

We see this particular warning often. It usually comes from overly strict robots.txt rules that were written to stop a bad bot.

For example, a local music venue blocks all crawlers, including Google, from accessing its website. Don’t worry, we will let them know.
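The difference between blocking one bad bot and blocking everyone can be tested before you publish a robots.txt file. The sketch below uses Python’s standard `urllib.robotparser` module. The helper name `is_allowed`, the bot name `BadBot`, and the example URL are assumptions for illustration, and Python’s parser may not match Google’s own robots.txt handling in every detail.

```python
from urllib.robotparser import RobotFileParser


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if robots_txt lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Overly strict: every crawler is blocked, including Googlebot.
BLOCK_EVERYONE = """\
User-agent: *
Disallow: /
"""

# Targeted: only the (hypothetical) bad bot is blocked.
BLOCK_ONE_BOT = """\
User-agent: BadBot
Disallow: /
"""

url = "https://example.com/events"
print(is_allowed(BLOCK_EVERYONE, "Googlebot", url))  # False
print(is_allowed(BLOCK_ONE_BOT, "Googlebot", url))   # True
print(is_allowed(BLOCK_ONE_BOT, "BadBot", url))      # False
```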

Robots are blocking this page

Because Google could not see the title tag, meta description, or content of the page, the snippet shown in search results is less than optimal.

Is there a way to fix this? Usually, this warning occurs when a disallow rule in your robots.txt file is combined with a noindex directive in the HTML of the page. The disallow rule stops crawlers from fetching the page, so they never see the noindex. Remove the disallow rule, and these pages will be dropped from search engine indexes once crawlers see the noindex directive.

List of valid URLs in Google Search Console

You can use this list to determine which parts of your site are healthy. It shows the pages that Google has crawled and indexed successfully. We will still explain each status, even though these are not issues.

Submitted and indexed:

The URL was indexed after you submitted it for indexing.

You told Google about the page, and Google liked it enough to index it. You got what you wanted, so toast yourself with champagne!

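Indexed, not submitted in sitemap:

Google found and indexed the URL even though it is not in your sitemap. Consider adding these URLs to your sitemap. In doing so, Google may crawl your content more frequently, which can translate into higher rankings and more traffic for your website.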
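If indexed URLs are missing from your sitemap, one option is to regenerate the file from your full list of pages. The sketch below builds a minimal XML sitemap in Python using the standard sitemaps.org namespace. The function name `build_sitemap` and the example URLs are hypothetical, and a real sitemap may carry more fields than the bare `<loc>` shown here.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls: list[str]) -> str:
    """Return a minimal XML sitemap listing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical pages; use the URLs listed under this status in your report.
print(build_sitemap([
    "https://example.com/",
    "https://example.com/blog/search-console-errors",
]))
```

Save the output as your sitemap file and submit it in Search Console so that Google knows about every page you want crawled.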

Indexed; consider marking as canonical:

The URL was indexed, but the same page can also be reached through several duplicate URL variations.

Duplicate URLs are bad for SEO since they dilute any authority a page may have gained through external backlinks. They also make your analytics reporting messy, and they waste a search engine’s resources on crawling multiple URLs for a single page.

Blocked by page removal tool:

A URL removal request is currently blocking the page.

Someone at your company used Google’s page removal tool to request that this page be removed. If you want the page to stay out of search results, consider deleting it so that it returns a 404 error, or requiring a login. Otherwise, Google may index the page again.

Blocked due to unauthorized request (401):

When building a website, it is common to link to staging sites and then forget to update the links once the site goes live.

Make sure these links are fixed on your site. For a site with many pages, you may need help from your IT department. You can scan your site in bulk with the crawling tools in iMetaDex.

Crawled – not indexed yet:

Google crawled but did not index the page. There is no need to resubmit this URL for crawling; it may or may not be indexed in the future.

If you see this, check your content carefully. Does it answer the searcher’s question? Is the content accurate? Are you providing your users with a good experience? Are the sources you link to reputable? Does anyone else link to the page? Optimizing the page may increase its chances of being indexed the next time Google crawls it.

Duplicate non-HTML page:

The non-HTML page (such as a PDF file) is a duplicate of another page that Google has marked as canonical.

The PDF document on your site contained the same information as the HTML version, so Google only indexed the HTML file. Since this is generally what you want, you shouldn’t need to take any action unless you prefer the PDF version.

Duplicate, Google chose a different canonical than the user:

Google thinks another URL would make a better canonical for this set of pages.

It’s common for websites to specify one version of a page as the canonical version, but then redirect the user to another. Because Google cannot access the version you specified, it overrides your directive.

Page with redirect:

The URL is a redirect, so it was not indexed.

Links to old URLs that redirect to new ones will still be detected by Google and included in the Coverage report. Wherever you link to an old URL, consider updating the link so search engines won’t need to follow a redirect to find your content.

Queued for crawling:

The page is in the crawling queue; check back in a few days to see whether it has been crawled.

This is good news! Your content should appear in search results soon. Go microwave some popcorn and check back later. In the meantime, the Index Coverage report will help you identify all the other pesky issues you need to fix.

Soft 404:

The page request returns a soft 404 response.

From Google’s perspective, these pages are nothing more than shells: the remains of something that once worked but no longer exists. Convert these pages into real 404 pages, or populate them with valuable content.

Mobile Usability Report: How To Navigate

Since mobile devices account for an increasing portion of web traffic and daily searches, Google and other search engines have increased their focus on mobile usability when ranking pages.

You can use Google Search Console’s Mobile Usability report to identify issues with mobile-friendliness that may be hindering your website’s organic traffic.

Viewport not set to “device-width”

The viewport is fixed-width, so it cannot adjust for different screen sizes. Your site’s pages need to be responsively designed, and the viewport should be set to complement the device’s width and scale.
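A responsive page normally declares its viewport with a tag such as `<meta name="viewport" content="width=device-width, initial-scale=1">`. The Python sketch below checks a page’s HTML for that setting. The names `ViewportParser` and `uses_device_width` are hypothetical, and the check is a rough approximation of the idea, not a reproduction of Google’s own test.

```python
from html.parser import HTMLParser


class ViewportParser(HTMLParser):
    """Remember the content of the <meta name="viewport"> tag, if any."""

    def __init__(self):
        super().__init__()
        self.viewport = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() == "viewport":
            self.viewport = attributes.get("content") or ""


def uses_device_width(html: str) -> bool:
    """Return True if the viewport meta tag sets width=device-width."""
    parser = ViewportParser()
    parser.feed(html)
    if parser.viewport is None:
        return False  # No viewport tag at all.
    settings = {}
    for part in parser.viewport.split(","):
        key, _, value = part.partition("=")
        settings[key.strip().lower()] = value.strip().lower()
    return settings.get("width") == "device-width"


responsive = '<meta name="viewport" content="width=device-width, initial-scale=1">'
fixed = '<meta name="viewport" content="width=1024">'
print(uses_device_width(responsive))  # True
print(uses_device_width(fixed))       # False
```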

Content wider than screen

This usually happens when an image or element on your page does not resize properly for mobile devices. The quickest fix is to remove the element or image that does not size appropriately on mobile devices. If you want to keep it, you will need to modify your code to make the element responsive.

Text too small to read

The font size is too small, so mobile visitors would have to pinch to zoom in to read the page. In other words, your website is hard to read on mobile devices. You can load the page on your smartphone and experience this for yourself.

Conclusion

Our product iMetaDex™ offers a comprehensive diagnosis and solution regarding online ranking and visibility. One click is all it takes to set up our patented algorithm, which is entirely automated. Our change management cycle also includes a continuous feedback loop.

To learn more about iMetaDex™, click here.

MetaSense Marketing Management Inc.

866-875-META (6382)

support@metasensemarketing.com